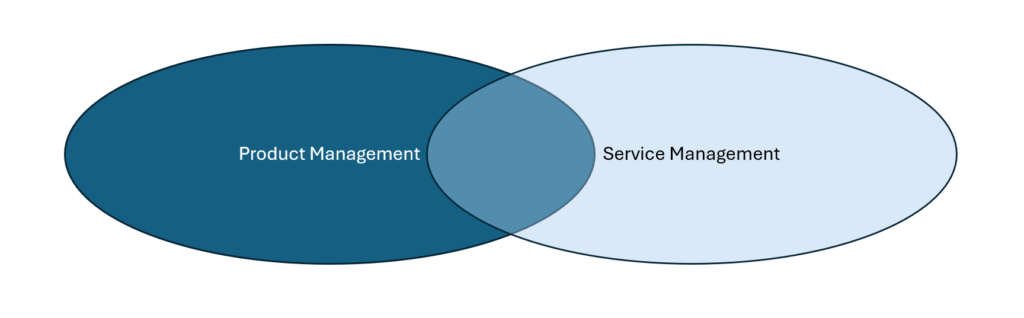

With the imminent release of ITIL® Product (Gray et al., 2026), PeopleCert has expanded the scope of traditional Service Management by combining Digital Product and Service Management. While product concepts are present throughout all of the new books, ITIL® Product reframes product development as an end‑to‑end lifecycle that runs from discovering opportunities to operating and continually improving digital products and services, rather than as a narrow “build” phase inside projects. This mirrors the historical shift from early brand management, where a single manager was accountable for a product’s commercial performance over time, to modern product management, which owns outcomes across discovery, design, delivery, and operations. By explicitly structuring the lifecycle into activities such as Discover, Design, Acquire, Build, Transition, and Operate, ITIL® Product codifies the same holistic view that has gradually emerged in the history of product development as organizations moved away from one‑off project thinking toward continuous product stewardship.

A second big idea is the strong emphasis on aligning product work with vision, strategy, and portfolio, which reflects the evolution from “feature factories” to outcome‑driven product organizations. In the Discover and Design chapters, ITIL® Product repeatedly stresses the need to understand context and inputs, agree direction and objectives, and ensure that product roadmaps stay anchored in organizational strategy and value creation. Historically, this echoes the move from reactive, sales‑driven roadmaps toward strategic product management, where decisions are guided by portfolio trade‑offs, positioning, and long‑term customer value rather than just near‑term delivery capacity.

ITIL® Product also embeds cross‑functional collaboration and agile ways of working as core success factors, which parallels the industry’s shift from siloed development and operations to integrated product teams. The Build and Operate chapters highlight critical success factors such as strong collaboration between engineering, product management, design, and quality assurance; agile cadences; automated CI/CD pipelines; and incremental delivery with feature toggles and safe rollouts. These practices track closely with the historical rise of Agile, DevOps, and empowered product teams that own the full lifecycle, breaking down the old handoffs between development, operations, and service management.

Another major theme is the product‑and‑service value chain: ITIL® Product systematically ties product decisions to the realities of cloud sourcing, service providers, and operational environments, including activities like acquiring cloud services, planning transitions, and coordinating responsibilities between vendors and service providers. This reflects how modern product development history has moved from shipping boxed software or discrete projects to operating live digital services in complex ecosystems. As organizations adopted SaaS, cloud platforms, and managed services, product management had to expand its remit to include sourcing, deployment, and long‑term operability—precisely the terrain ITIL® Product formalizes.

Finally, the book’s focus on metrics and continuous improvement as part of each lifecycle activity, with measures such as delivery velocity, cycle time, defect leakage, and team health, reflects the historical maturation of product development into a data‑driven discipline. ITIL® Product treats these measures and their associated “critical success factors” as integral to managing products effectively, not as optional reporting after the fact. This aligns with the broader trajectory from intuitive, craft‑driven product development to modern product operations and analytics practices, where teams continuously learn from production feedback and telemetry to refine products and processes over time. ITIL® Product is a solid contribution to the field, and it connects well to the broader concepts of ITIL®.

#ProductManagement #ITSM #PeopleCert

Bibliography

Gray, V., Konageski, W., & McDonald, S. (2026). ITIL® Product. PeopleCert.